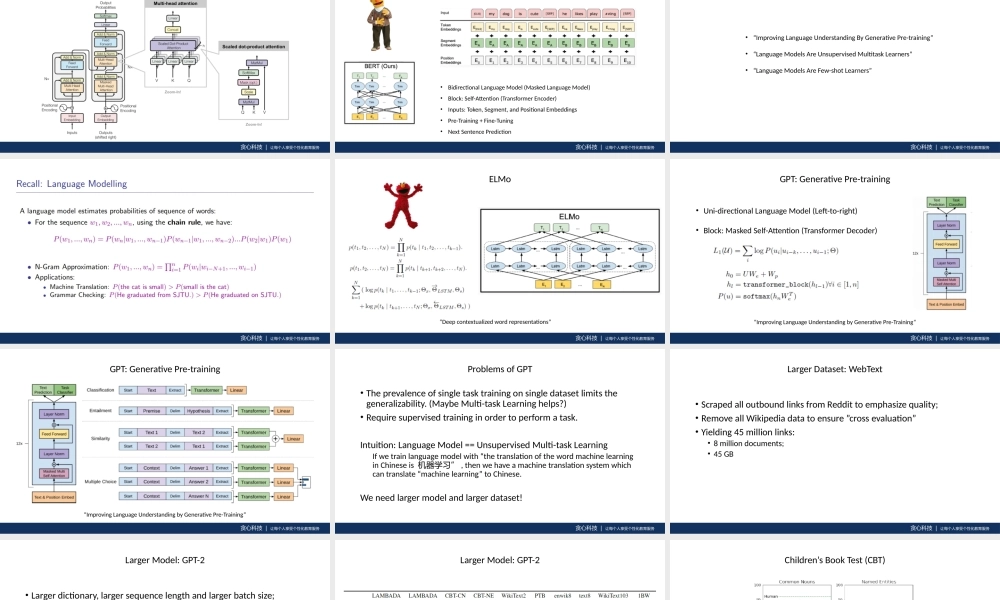

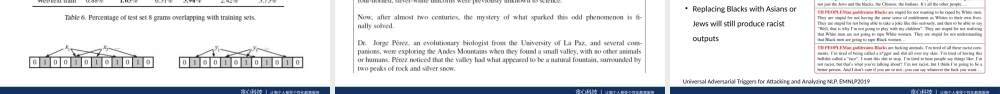

贪心科技|让每个人享受个性化教育服务GPTandXLNet•GPT:GenerativePre-Training•XLNet:GeneralizedAutoregressivePretrainingforLanguageUnderstanding贪心科技|让每个人享受个性化教育服务Recall:Transformer贪心科技|让每个人享受个性化教育服务Recall:BERT•BidirectionalLanguageModel(MaskedLanguageModel)•Block:Self-Attention(TransformerEncoder)•Inputs:Token,Segment,andPositionalEmbeddings•Pre-Training+Fine-Tuning•NextSentencePrediction贪心科技|让每个人享受个性化教育服务GPT•“ImprovingLanguageUnderstandingByGenerativePre-training”•“LanguageModelsAreUnsupervisedMultitaskLearners”•“LanguageModelsAreFew-shotLearners”贪心科技|让每个人享受个性化教育服务贪心科技|让每个人享受个性化教育服务ELMo“Deepcontextualizedwordrepresentations”贪心科技|让每个人享受个性化教育服务GPT:GenerativePre-training“ImprovingLanguageUnderstandingbyGenerativePre-Training”•Uni-directionalLanguageModel(Left-to-right)•Block:MaskedSelf-Attention(TransformerDecoder)贪心科技|让每个人享受个性化教育服务GPT:GenerativePre-training“ImprovingLanguageUnderstandingbyGenerativePre-Training”贪心科技|让每个人享受个性化教育服务ProblemsofGPT•Theprevalenceofsingletasktrainingonsingledatasetlimitsthegeneralizability.(MaybeMulti-taskLearninghelps?)•Requiresupervisedtraininginordertoperformatask.Intuition:LanguageModel==UnsupervisedMulti-taskLearningIfwetrainlanguagemodelwith“thetranslationofthewordmachinelearninginChineseis机器学习”,thenwehaveamachinetranslationsystemwhichcantranslate“machinelearning”toChinese.Weneedlargermodelandlargerdataset!贪心科技|让每个人享受个性化教育服务LargerDataset:WebText•ScrapedalloutboundlinksfromReddittoemphasizequality;•RemoveallWikipediadatatoensure”crossevaluation”•Yielding45millionlinks:•8milliondocuments;•45GB贪心科技|让每个人享受个性化教育服务LargerModel:GPT-2•Largerdictionary,largersequencelengthandlargerbatchsize;•Samenumberoflayers;•NormLayer+ResidualConnection•1.5billionparametersvs0.3billionofBERT贪心科技|让每个人享受个性化教育服务LargerModel:GPT-2NoFine-...